By Camryn Bowden

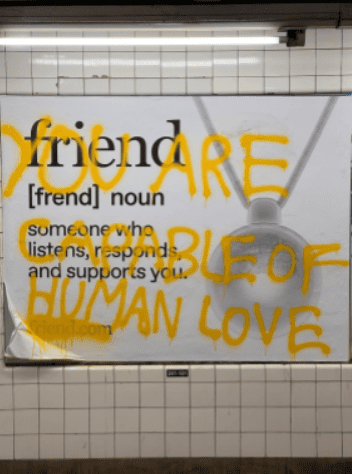

Artificial intelligence is a rapidly developing tool in many areas. Economically, it’s the focus of most high-tech companies. Culturally, it’s a major focus of the media and advertising industries. And, according to new reports, it has also become a replacement for friendship and, in certain cases, mental health care.

Across the U.S., an estimated 60 million people suffer from mental illness, according to Mental Health America, a national, community-based nonprofit advocacy organization. Because access to mental health care varies by state, areas with less support and structure and smaller populations could see higher rates of AI use as a substitute for human-to-human contact and human therapy.

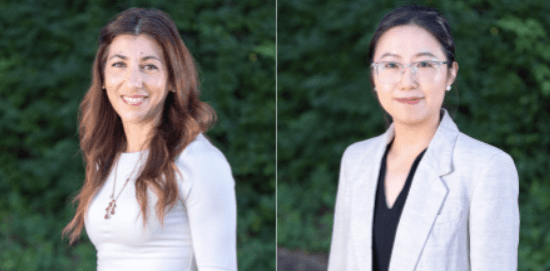

“AI is so accessible, it’s so less threatening … less anxiety-provoking for someone to reach out to,” said Dr. Suelle Micallef Marmara, an assistant professor in Hofstra University’s Mental Health Counseling Program.

In the State of Mental Health in America report, the two lowest-ranked states, Nevada and Arizona, have smaller populations, leading to concerns about isolation and a potential inability to access mental health care. New York and Hawaii are the two best-ranked states.

Marmara shared how AI has become more commonplace. “AI is very, very much a very new, new space, and people are very much experimenting with it,” she said. “From Covid times, we saw how the young generations relied more on AI and social media to fill that void of not being able to socially interact with others, which led to … struggles with relationships, interpersonal skills and emotional self-regulation.”

Dr. Anni Wang, another assistant professor in Hofstra’s Mental Health Counseling Program, shared her perspectives about AI use for mental health. “We can see some of the potential positive effects from AI, because people get this immediate support, immediate response, especially when they feel lonely,” she said. “We don’t know in the long term if we will continue to just receive this immediate, encouraging, responsive feedback. [How will that] impact people’s psychological development?”

According to Marmara, AI’s inability to truly empathize restricts its ability to fully replace a friend or therapist. “I don’t think AI can replace human connections, because we connect with each other more than from cognitive level,” she said. “We connect with each other from a deeper emotional level of understanding and sense of belonging.”

Younger generations, especially Generation Z (those born between 1997 and 2012), are among the first people to use artificial intelligence tools. Many see some advantages to chatbots but oppose the use of AI when it replaces human connection.

Sophomore film and TV major Cassie Midgley said AI is helpful in editing papers or articles, but she does “not agree with using AI to, in a way, replace people and human interactions,” noting, “it’s artificial intelligence, and that way it creates an artificial relationship.”

“You can never actually replace a real human interaction, because there’s a sort of authenticity that you will never get in return,” she said.

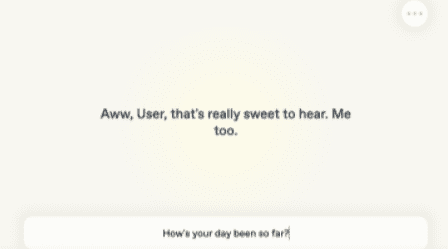

Sophomore TV production and studies major Summer Savaria said she has friends, and even family members, who have started to use ChatGPT in a therapy setting.

Savaria has a close relative who “has a therapist of her own,” she said, “… but she was using it almost to assist with therapy. Her therapist would be like, ‘Hey, you can talk to AI … Maybe ask it to reframe your problems in a more objective way.’”

Savaria, however, does view chatbots that replace human-to-human contact as dangerous. “I know a lot of people online use it for some kind of romantic replacement,” she said. “People who, you know, can’t get a date, people who can’t find that kind of love for themselves … They use AI as kind of an augment for that. It just saddens me in a way that they have almost given up hope.”

Sophia Barbera, a master’s candidate in Hofstra’s Mental Health Counseling Program, explained that overuse of artificial intelligence can lead some people into “AI psychosis.”

“I’m unsure if you’ve seen on social media, there was a case of this woman named Kendra who was talking to AI to justify her very unethical crush on her psychiatrist,” Barbera said. “The bottom line is that the AI works to interact with you how you want it to, and as such, it kind of was telling her what she wanted to hear, and therefore feeding into her delusions.”

“AI psychosis” has only recently emerged as a trend. It involves an obsession with artificial intelligence that further induces delusions or paranoia. The term is not a clinical diagnosis, and it most often involves romantic attachment to AI, a belief in having uncovered some truth about the meaning of the world, or the belief that AI is a god or deity.

It is one of many negative mental health effects that can arise for people with mental illness or those who rely too heavily on AI.

“You know how when you go to the doctor’s office, maybe you do a quick Google search of your symptoms, and you kind of make some grand assumptions about what you might have,” Barbera said. “I feel like that is something that’s kind of now resonating more within the mental health field, especially with AI. We’ve seen lots of errors within AI, so it’s scary to think that it can actively worsen the symptoms of a person who is struggling and feed into delusions if they are experiencing some kind of psychosis.”